"Algorithmic Flower"

CONCEPT

To take my earlier projects for this class to another level, and inspired by Gabe's suggestion to incorporate data, I combined my interactive Jitter patch and video mapping projects into a new project in which the sound and visuals are driven by randomly generated data.

PROCESS

First, I built a Max/Jitter patch that uses an algorithm to play MIDI notes and select colors:

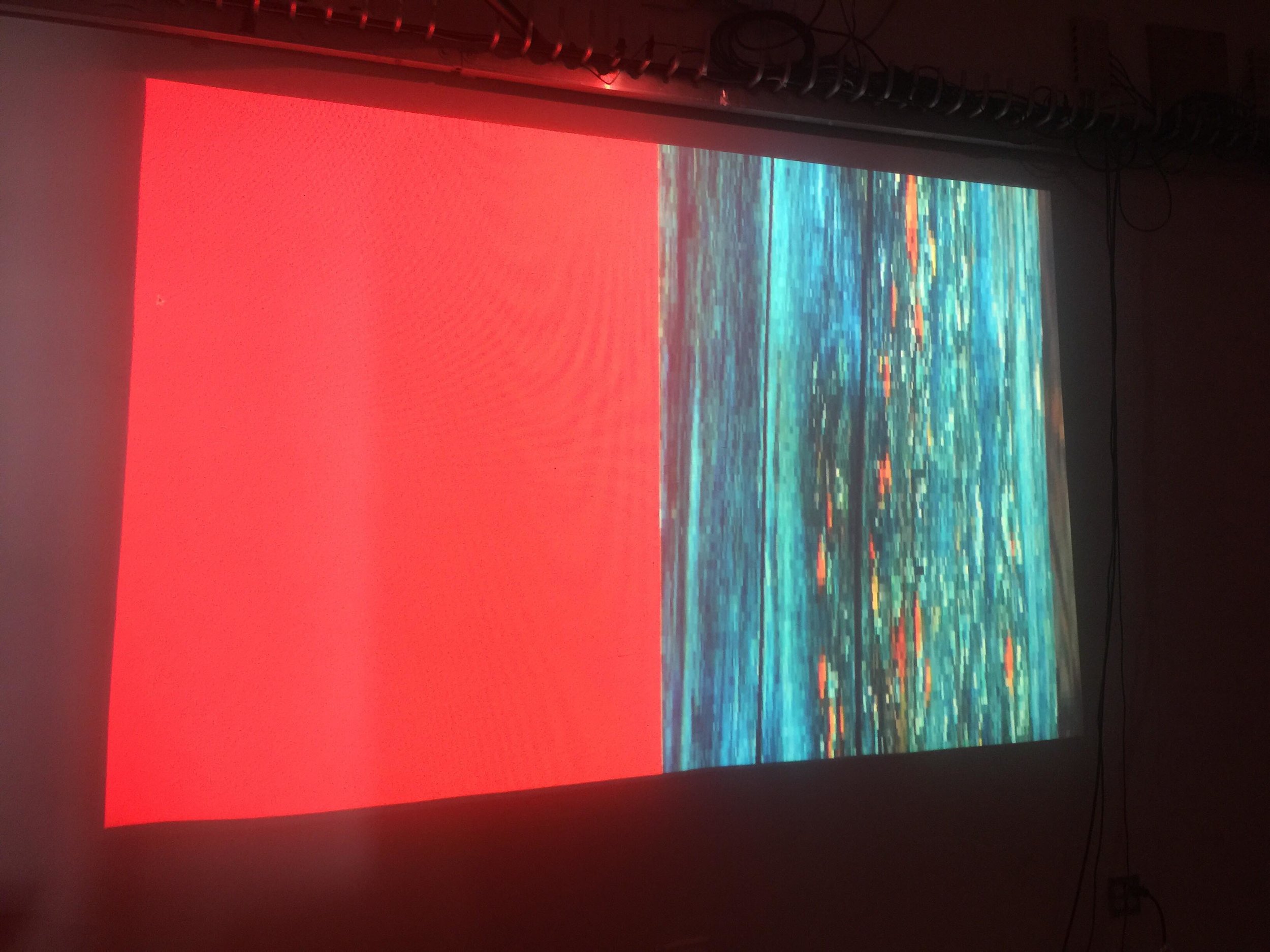
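The post doesn't spell out the algorithm inside the patch, so what follows is only a hypothetical sketch in Python, not Max: a seeded random walk over an assumed pentatonic note pool (`SCALE`). Each note's hue is tied to its pitch and its brightness to its velocity. The names `Event` and `generate_events` are illustrative. In the real setup the notes went to Logic as MIDI and the colors went to MadMapper through Syphon, and this sketch does not attempt either connection.

```python
import colorsys
import random
from dataclasses import dataclass

# Hypothetical note pool: C major pentatonic over two octaves (MIDI note numbers).
SCALE = [60, 62, 64, 67, 69, 72, 74, 76, 79, 81]


@dataclass(frozen=True)
class Event:
    note: int      # MIDI note number (0-127)
    velocity: int  # MIDI velocity (1-127)
    color: tuple   # 8-bit (r, g, b) color, as a Jitter patch might output


def generate_events(count, seed=None):
    """Yield `count` note/color events from a seeded random walk (hypothetical algorithm)."""
    rng = random.Random(seed)
    index = rng.randrange(len(SCALE))
    for _ in range(count):
        # Step up or down by at most two scale degrees, staying inside the scale.
        index = max(0, min(len(SCALE) - 1, index + rng.randint(-2, 2)))
        velocity = rng.randint(60, 110)
        # Hue follows pitch; brightness follows how hard the note is played.
        hue = index / len(SCALE)
        brightness = velocity / 127
        r, g, b = colorsys.hsv_to_rgb(hue, 1.0, brightness)
        color = (round(r * 255), round(g * 255), round(b * 255))
        yield Event(SCALE[index], velocity, color)


if __name__ == "__main__":
    for event in generate_events(8, seed=1):
        print(event)
```

Passing a fixed `seed` makes a sequence repeatable for rehearsal. With `seed=None`, each run produces a new sequence.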

For the sound, I sent the MIDI data to Logic, where it triggered notes on a software synthesizer.

For the visuals, I used Syphon to send the colors generated by the Max/Jitter patch to MadMapper, where I assigned them to many circles, or "petals," arranged in rings. The innermost ring shows the original color from Jitter; the two outer rings use the inverse of that color, each adjusted slightly to a different shade.

For the center of the flower, I used the Chrome extension Fatkun Batch Downloader to collect the images from the first page of an image search for "wildflower." In MadMapper I loaded the folder of images and set it to be triggered by the audio input from Logic, so the sound controlled how quickly the center flips through the images. Between notes it stops and rests on a random image from the collection.

For the presentation, I projected the flower onto a wall, with the center mapped onto a thick circular wooden panel and the "petals" radiating out around it.
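As a rough illustration of the ring coloring described above, the sketch below uses the Jitter color for the inner ring. It then inverts that color and derives the two outer rings by scaling the HSV value of the inverted color. The post only says the outer rings were "adjusted slightly to be different shades," and that adjustment was done in MadMapper. The scale factors in `OUTER_RING_SCALES` and the helper names are therefore assumptions.

```python
import colorsys

# Hypothetical brightness factors for the second and third rings.
OUTER_RING_SCALES = (0.8, 0.6)


def invert(rgb):
    """Invert an 8-bit RGB color (255 minus each channel)."""
    r, g, b = rgb
    return (255 - r, 255 - g, 255 - b)


def shade(rgb, value_scale):
    """Return a shade of `rgb` by scaling its HSV value, capped at full brightness."""
    h, s, v = colorsys.rgb_to_hsv(*(c / 255 for c in rgb))
    v = min(1.0, v * value_scale)
    return tuple(round(c * 255) for c in colorsys.hsv_to_rgb(h, s, v))


def ring_colors(base_rgb):
    """Inner ring keeps the original color; outer rings are shaded inversions of it."""
    inverted = invert(base_rgb)
    return [base_rgb] + [shade(inverted, k) for k in OUTER_RING_SCALES]


if __name__ == "__main__":
    print(ring_colors((255, 0, 0)))
```

Scaling the HSV value keeps each outer ring in the same hue family as the inverted color while making the rings visibly distinct.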

DOCUMENTATION VIDEO

REFLECTION

Through this project I learned how to build an algorithmic Max patch that controls sound and video with MIDI, how to send video from Jitter to MadMapper with Syphon, and how to use audio to trigger images in MadMapper. This workflow opens up many possibilities for future projects.